SAEBER: Sparse Autoencoders for Biological Entity Risk

What do protein model brains know about the virulence of pathogens?

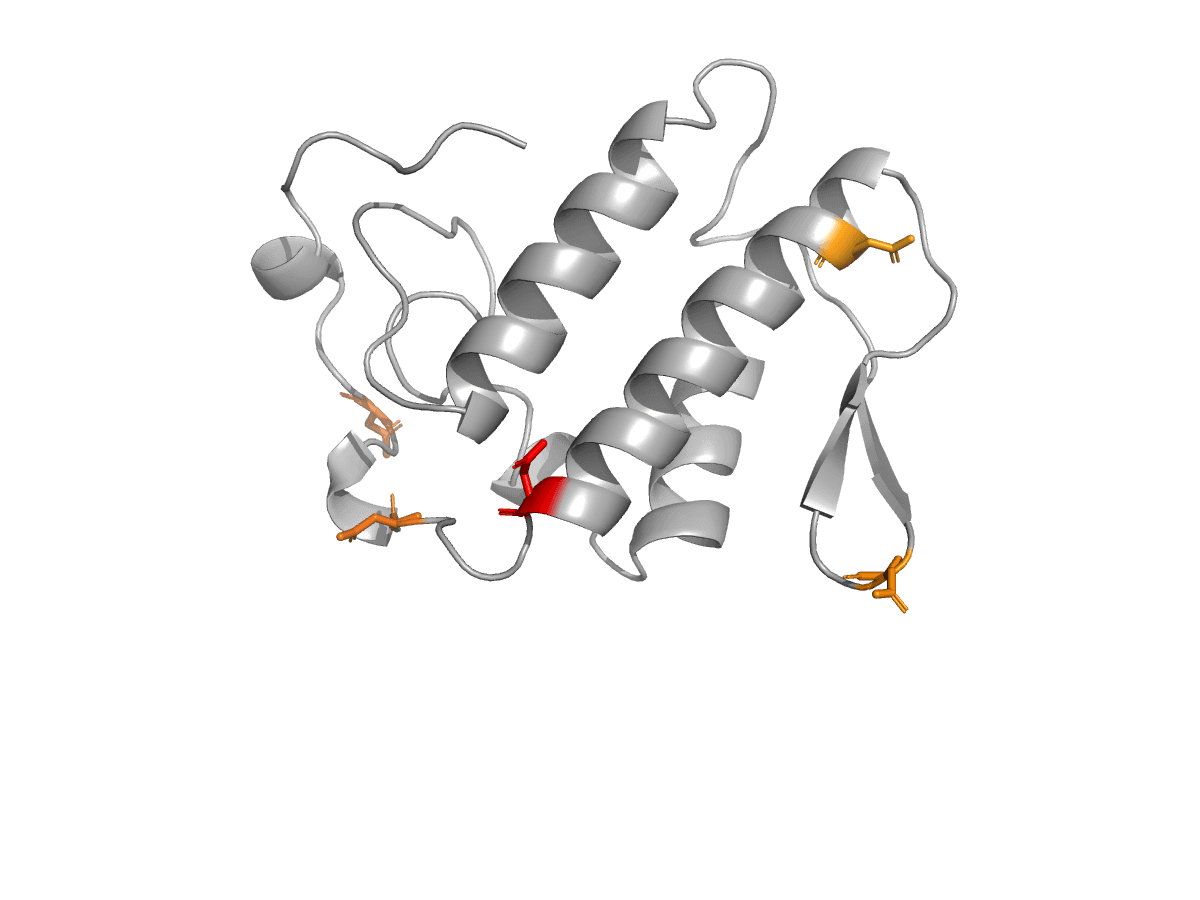

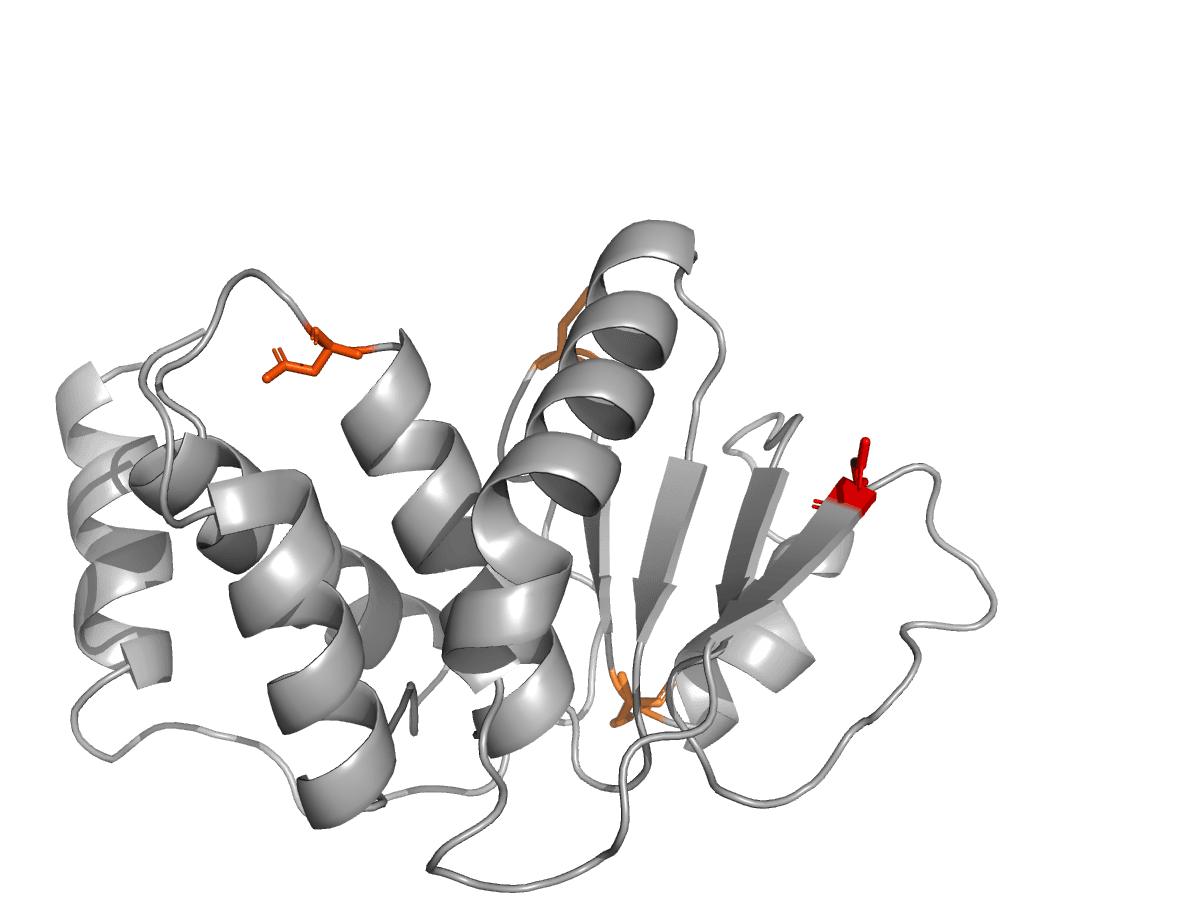

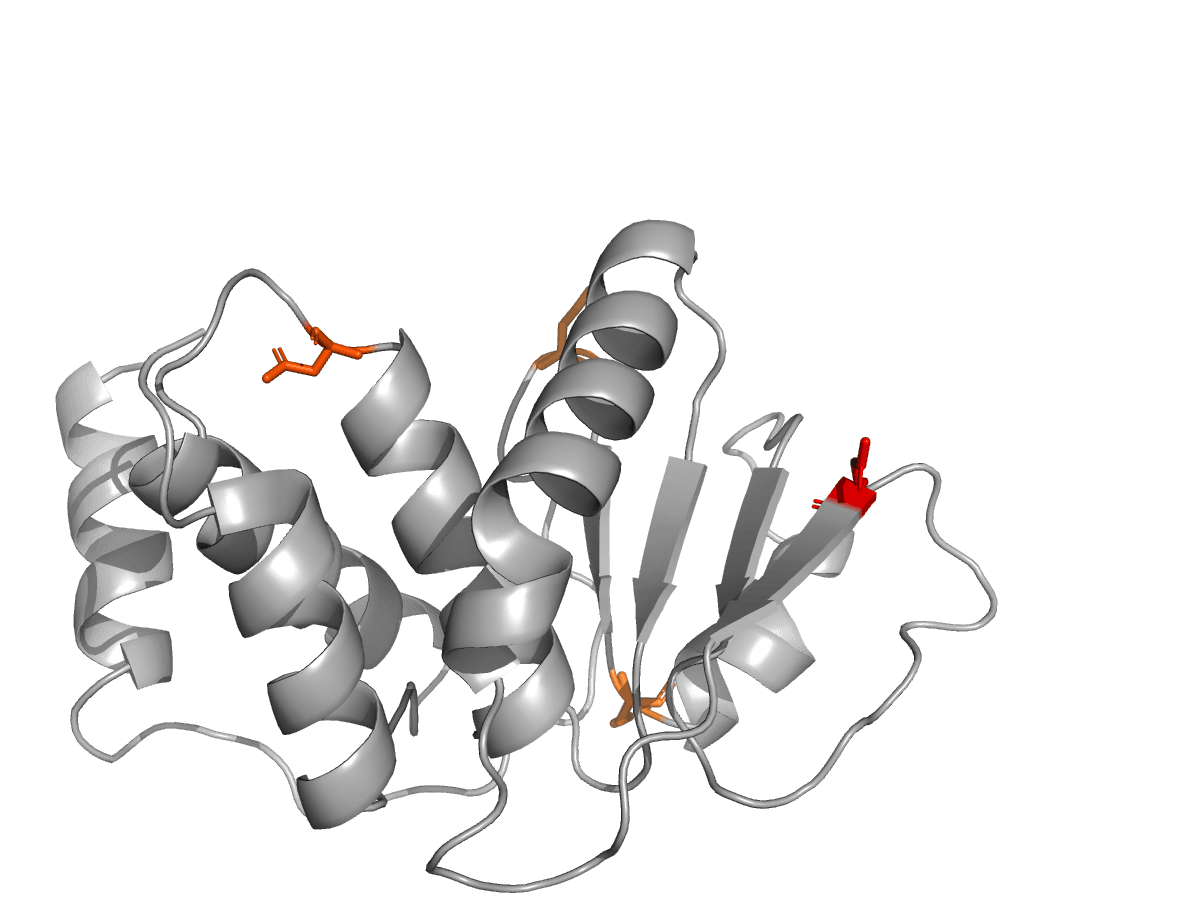

We trained Sparse Autoencoders (SAEs) and logistic regression classifiers on activations (intermediate computations) from RF3 and RFDiffusion3, two leading open-source protein folding and design models. We then used them to distinguish viral hazards in SafeProtein from length-matched benign UniProt proteins, and to identify which SAE features correlate with viruses and toxins.
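To make the "which features are correlated" step concrete, here is a minimal sketch of ranking SAE features by their Pearson correlation with a hazard label. It is an illustration under assumptions, not the project's actual pipeline: the function names `pearson` and `rank_features`, the toy activation values, and the choice of Pearson correlation as the scoring rule are all hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def rank_features(feature_acts, labels):
    """Rank SAE features by absolute correlation with the label.

    feature_acts: one row per protein, one column per SAE feature (assumed
                  to be pooled over residues already).
    labels:       1 for hazard, 0 for benign (hypothetical encoding).
    Returns a list of (feature_index, correlation), strongest first.
    """
    n_features = len(feature_acts[0])
    scores = []
    for j in range(n_features):
        column = [row[j] for row in feature_acts]
        scores.append((j, pearson(column, labels)))
    return sorted(scores, key=lambda s: abs(s[1]), reverse=True)


# Toy data (made up): feature 0 fires mostly on hazards, feature 1 is
# unrelated to the label, and feature 2 is constant.
toy_acts = [
    [0.9, 0.0, 0.5],
    [0.8, 0.1, 0.5],
    [0.0, 0.0, 0.5],
    [0.1, 0.1, 0.5],
]
toy_labels = [1, 1, 0, 0]

ranking = rank_features(toy_acts, toy_labels)
for index, corr in ranking:
    print(f"feature {index}: r = {corr:+.3f}")
```

On the toy data, feature 0 ranks first because it is the only column whose activation tracks the label.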

We also controlled for paralog memorization by clustering homologous sequences with MMseqs2 and by matching sequence length, just in case.
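One common way to use homology clusters as a memorization control is to split train and test sets by cluster rather than by protein, so that no cluster contributes to both sides. The sketch below assumes that approach; the text above does not say exactly how the clusters were used. The function name `cluster_split`, the protein and cluster identifiers, and the 20% test fraction are hypothetical.

```python
import random


def cluster_split(cluster_of, test_fraction=0.2, seed=0):
    """Split proteins so that every homology cluster lands entirely in train or test.

    cluster_of: dict mapping protein ID -> cluster ID (assumed to come from
                a homology clustering tool run beforehand).
    Returns (train_ids, test_ids) as sorted lists.
    """
    clusters = sorted(set(cluster_of.values()))
    rng = random.Random(seed)  # local RNG keeps the split reproducible
    rng.shuffle(clusters)
    n_test = max(1, round(len(clusters) * test_fraction))
    test_clusters = set(clusters[:n_test])
    train_ids = sorted(p for p, c in cluster_of.items() if c not in test_clusters)
    test_ids = sorted(p for p, c in cluster_of.items() if c in test_clusters)
    return train_ids, test_ids


# Toy assignment (made up): eight proteins in five clusters.
toy_clusters = {
    "protA": "c1", "protB": "c1",
    "protC": "c2",
    "protD": "c3", "protE": "c3", "protF": "c3",
    "protG": "c4",
    "protH": "c5",
}

train_ids, test_ids = cluster_split(toy_clusters)
print("train:", train_ids)
print("test: ", test_ids)
```

Splitting at the cluster level means a classifier cannot score well on the test set simply by recognizing a close relative of a training protein.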

Explore the data and features below!